Start Cassandra:

    apache-cassandra-x.x.x/bin/cassandra

This will start Cassandra. You can add apache-cassandra-x.x.x/bin to your PATH, but this is not required. For more information about non-default configurations, review the Apache Cassandra documentation.

Create a table and insert two rows:

    CREATE TABLE IF NOT EXISTS testing123 (id int, name text, city text, PRIMARY KEY (id));
    INSERT INTO testing123 (id, name, city) VALUES (1, 'Amanda', 'Bay Area');
    INSERT INTO testing123 (id, name, city) VALUES (2, 'Toby', 'NYC');

We will use this keyspace and table later to validate the connection between Apache Cassandra and Apache Spark.

Set SPARK_HOME and start the Spark master:

    export SPARK_HOME="//spark-x.x.x-bin-hadoopx.x"
    sbin/start-master.sh

Information about the Apache Spark Connector

The Apache Cassandra and Apache Spark Connector moves data back and forth between Apache Cassandra and Apache Spark, so that the power of Apache Spark can be applied to the data. The connector gathers data from Apache Cassandra by its known token ranges and pages that data into the Spark Executor. Under the hood, the connector uses the DataStax Java driver to move data between Apache Cassandra and Apache Spark. It should be co-located with Apache Cassandra and Apache Spark, with both on the same node.

Note: This guide works only with PySpark, where only DataFrames are available.

Also, feel free to reach out and add comments on what worked for you!
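To validate the connection with the testing123 table, a read and a write through the connector's DataFrame interface might look like the sketch below. It assumes PySpark was launched with the connector package available. The keyspace name `my_keyspace` and the helper names are hypothetical placeholders, since the keyspace is not named here.

```python
# Sketch only: helper names and "my_keyspace" are illustrative assumptions.
# The data source name below is the one registered by the Spark Cassandra Connector.
CASSANDRA_FORMAT = "org.apache.spark.sql.cassandra"


def cassandra_options(keyspace, table):
    """Build the keyspace/table options passed to the connector."""
    return {"keyspace": keyspace, "table": table}


def load_table(spark, keyspace, table):
    """Return a DataFrame backed by a Cassandra table."""
    return (
        spark.read.format(CASSANDRA_FORMAT)
        .options(**cassandra_options(keyspace, table))
        .load()
    )


def append_to_table(df, keyspace, table):
    """Append the rows of a DataFrame to an existing Cassandra table."""
    (
        df.write.format(CASSANDRA_FORMAT)
        .options(**cassandra_options(keyspace, table))
        .mode("append")
        .save()
    )
```

From a PySpark shell, where a `spark` session already exists, calling `load_table(spark, "my_keyspace", "testing123").show()` should display the rows inserted earlier if the connection is working.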

Hopefully, this works for you (as it did for me!), but if not, use this as a guide.

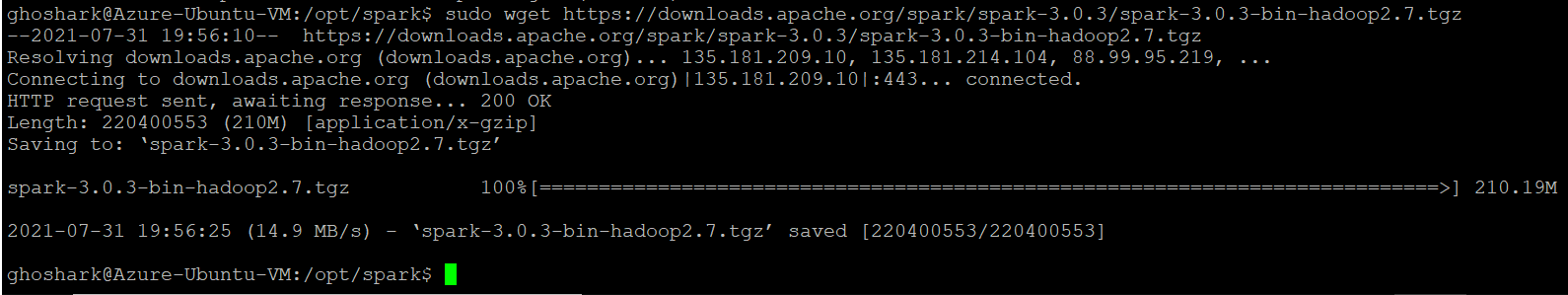


Note: No set of install instructions will work in all cases.